Understanding How Google Algorithm Updates Affect Your SEO Campaigns

By: Rank Media

Panda. Penguin. Hummingbird. Pigeon. What do these names of animals have to do with search engine optimization?

To the inexperienced marketing professional, it may sometimes sound like Google runs a zoo. However, these names refer to algorithm updates the search engine giant has rolled out since 2011. These updates are part of a constant effort to make the results listed in SERPs (“Search Engine Results Pages”) more relevant to the queries users enter. These changes to Google’s ranking algorithm and core engine also came about as a way to penalize what we in the industry call “black-hat” techniques. Back in the day, it was easy for webmasters and marketing gurus to circumvent Google’s guidelines, cheat the system, and achieve rankings quickly. However, search engine optimization has evolved quite a bit since then, with Google penalizing questionable tactics and relying more on relevancy, content, and trust factors to rank websites.

While Google regularly releases updates to its algorithm, there have been a few significant changes that all digital marketing professionals should fully comprehend before embarking on organic search and content marketing campaigns.

*Note: this post was updated on January 5th, 2018 to reflect new algorithm updates.

1. Panda

Date: February 24, 2011

Percentage of Queries Affected: 12%

What the update targeted: Black-hat tactics such as duplicate content, thin content, keyword stuffing, and spam.

Launched in early 2011, Panda was a significant update from Google that aimed at improving the search visibility for high-quality sites, while decreasing visibility or even penalizing sites that were considered low-quality. This update targeted sites that had any of the following:

- Thin or low-quality content.

- Too many advertisements (essentially a high ad-to-content ratio).

- Duplicate content from other websites.

- Duplicate content WITHIN the same site (e.g., the same product description on 1,000 product pages that differ only in color and size).

- Website content that provides little-to-no value for users.
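To make the idea of duplicate content a little more concrete, here is a minimal sketch of one common way to estimate how similar two pieces of text are: break each into overlapping three-word "shingles" and compare the sets using Jaccard similarity. This is an illustration only and not Google's actual method, which is not public. The function names and sample product descriptions are hypothetical.

```python
import re

def shingles(text: str, k: int = 3) -> set:
    """Return the set of k-word shingles (overlapping word windows) in text."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}

def jaccard(a: str, b: str, k: int = 3) -> float:
    """Jaccard similarity of two texts' shingle sets: 0.0 (disjoint) to 1.0 (identical)."""
    sa, sb = shingles(a, k), shingles(b, k)
    if not sa and not sb:
        return 0.0  # nothing to compare
    return len(sa & sb) / len(sa | sb)

# Hypothetical product descriptions that differ only in color.
red = "Classic cotton t-shirt with a relaxed fit, available in red, size medium."
blue = "Classic cotton t-shirt with a relaxed fit, available in blue, size medium."
# An unrelated page for comparison.
other = "Our guide explains how to choose hiking boots for rocky mountain trails."

print(f"red vs blue:  {jaccard(red, blue):.2f}")
print(f"red vs other: {jaccard(red, other):.2f}")
```

Pages that score close to 1.0 against each other are good candidates for consolidation or a rewrite. Any threshold you choose is a judgment call, not a documented Google cutoff.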

The objective here was to reward websites that invested in optimizing the user experience and writing original content. In fact, news websites benefited greatly from this update, as their core focus was producing fresh and unique content on an ongoing basis. Additionally, this update focused on promoting sites that were deemed more trustworthy and relevant in the eyes of users. Google even provided webmasters with guidance on the factors to consider when determining what counts as a high-quality site. Some of these include:

- Is the information within the article trustworthy?

- Are there duplicate or redundant articles with slight keyword changes throughout the website?

- Is the content relatively thin and lacking substance?

- Would the average user complain when seeing pages from the website?

While this update proved to be quite significant in the long run, webmasters and black-hat SEO “gurus” were still cheating the system by employing dirty tactics, which led to Google’s next significant update.

2. Penguin

Date: April 24, 2012

Percentage of Queries Affected: 3.1% of English Queries / 4% Overall

What the update targeted: Toxic backlinks, spammy anchor text in backlinks, irrelevant backlinks.

Also known as the “Webspam Update,” this major algorithm update targeted websites that relied on “over-optimization” tactics that violated Google’s Webmaster Guidelines. More specifically, this update targeted webmasters who used black-hat SEO strategies, such as the following:

- Cloaking: this is the practice of showing one version of a page to Google and sending users to different content after they click on the search result. Cloaking is seen as deceptive and hurts the overall user experience.

- Link Schemes: targeting link exchanges, excessive linking, and link purchasing, the Penguin update penalized sites that used any one of these spam/black-hat techniques to increase overall link popularity.

- Keyword Stuffing: webmasters used to flood page content, anchor text, and the keywords meta tag with search terms to cheat the system. Google had already confirmed in 2009 that it ignores the keywords meta tag for web ranking, and the Penguin update went further by penalizing keyword-stuffed content and links.

- Hidden Text: deceptive, hidden text is only visible to bots crawling webpages – which is seen as a tactic to cheat the system in Google’s eyes once again.

These tactics are deceptive and subject to penalties because they artificially boost the importance of a web page without providing any value to the end user. In particular, keyword stuffing and hidden text were tactics webmasters loved because they gave a website “organic search juice” without compromising the overall design. With regard to over-optimizing content, webmasters would use keywords excessively within content, providing no value to anyone except the spiders crawling the website (and I’m confident that if the bots crawling the site were conscious, they would find the content boring, too).

Overall, the update was necessary as too many websites were able to use article spinning and site manipulation to fast-track their way to the top of SERPs. Google also sought to eliminate over-linking, which was the death knell for search engine optimization strategies that relied upon building links from blog comments, author archives, and re-spun articles. Although only a small share of search queries was affected by this update, it set a precedent and reinforced guidelines to ensure the highest quality of search results.

3. Hummingbird

Date: August 22, 2013

Percentage of Queries Affected: 90% of searches worldwide.

What the update targeted: Websites with low-quality content, keyword stuffing, and other black-hat tactics.

Google’s most significant update since Caffeine, Hummingbird was launched towards the end of summer 2013. This update proved to be significant as it completely revamped Google’s search algorithm, which also changed the dynamic of managing strategic SEO. Rather than serving results based on keywords alone, Google introduced the concept of semantic search, which focuses on the user intent and context of a search. For marketing professionals and SEO gurus alike, this meant adjusting organic search strategies to focus on delivering great content consistently, with the intent of educating users and answering questions. Gone were the days of optimizing a page with mediocre content focused on one keyword and replicating that strategy across the site. Now, webmasters and website owners were more inclined to tailor content to search queries phrased as questions, incorporating long-tail keywords within the material.

While the day-to-day work of strategic search engine optimization did not change overnight, optimizing onsite content now involved additional elements. Maintaining a sensible keyword density is still essential, but the new age of SEO also calls for working semantically related terms, often referred to as LSI (latent semantic indexing) keywords, into website content so that a page covers its topic as a whole. For example, optimization for the keyword “search engine optimization” may entail incorporating the following LSI matches within the content:

- Organic Search

- Website Optimization

- Content Marketing

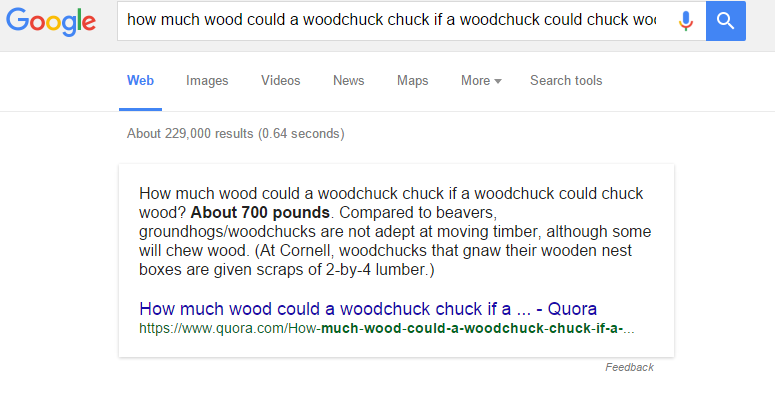
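As a rough illustration of keyword density, the sketch below counts how much of a page's text is taken up by a target phrase and by the related terms listed above. The definition used here (words belonging to matched phrases divided by total words) is an assumption for the example. There is no official formula or "optimal" value published by Google, and the function name and sample text are hypothetical.

```python
import re

def keyword_density(text: str, phrase: str) -> float:
    """Share of the words in text that belong to occurrences of phrase (0.0 to 1.0)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    target = re.findall(r"[a-z0-9']+", phrase.lower())
    if not words or not target:
        return 0.0
    n = len(target)
    # Count every position where the phrase's words appear consecutively.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == target)
    return hits * n / len(words)

# Hypothetical page copy.
sample = (
    "Search engine optimization helps organic search. "
    "Good search engine optimization takes time."
)

for term in ("search engine optimization", "organic search", "content marketing"):
    print(f"{term}: {keyword_density(sample, term):.1%}")
```

A check like this is mostly useful for spotting extremes: a primary keyword that dominates the copy, or related terms that never appear at all.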

Additionally, while the Knowledge Graph had been around since 2012, the Hummingbird update gave it a bit more juice for users. Google’s goal is to serve the most relevant results and in most cases, answers to users’ questions. In the example below, you can see that Google doesn’t bold keywords related to my query, but rather the answer I’m looking for to the age-old question relating to woodchucks chucking wood:

Although the Knowledge Graph is the bane of some webmasters due to its ability to serve answers directly within the search results (thereby reducing the chance that users click through to the websites listed below), it gives users access to information quickly. Additionally, the Knowledge Graph offers users access to a myriad of tools depending on the search query, ranging from calculators and Google Translate to local weather and metric conversion widgets.

Going back to the Hummingbird update, this forced organic search professionals to adopt updated practices for optimizing websites, changing the game of SEO forever.

4. Pigeon

Date: July 24, 2014

Percentage of Queries Affected: Varies according to industry (see stats here: How Google Pigeon Impacted Local Queries, and What You Can Do)

What the update targeted: Low-quality onsite and offsite SEO (for example, directories).

Initially rolled out in the United States for English queries in July 2014, Pigeon was a local search algorithm update that gave a preference to local search results. According to Google, the goal of this update was to provide a more relevant and customized experience for users searching for local products and services. As a result, major directories such as Yelp and industry-specific directories such as TripAdvisor and Urbanspoon saw higher visibility in search for localized queries. In fact, this update resolved a dispute between Yelp and Google, in which the local review site accused the search giant of listing Google’s own reviews ahead of Yelp’s results, even when users had entered queries that included the word “yelp.”

Google also stated that this update would improve their “distance and location ranking parameters” with regard to local SEO. While the statement itself is ambiguous, this translates to serving better results to users who search for queries related to neighbourhoods. With regard to local search, neighbourhoods are known as “informal space,” which means there are no official boundaries in some cases. Depending on where you were and which name you used for a neighbourhood (specific term or colloquial term), you may have gotten completely different results in the past. To resolve these issues, the updated algorithm now provides more specific results. Additionally, the Pigeon update makes it easier for Google to understand where you are searching from and to serve results from outside of the region/neighbourhood depending on your proximity to those local businesses. Overall, this update benefits websites that optimize for local search, which includes the following:

- Claiming and optimizing your business listing on Google My Business.

- Ensuring NAP (Name, Address, Phone Number) is consistent across the web.

- Listing your website in niche, local directories (don’t overdo it with “FREEXYZFORSEO” directory listings though – that will just send Google a signal that you’re inflating your backlink profile).
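For the NAP point above, a simple consistency check can be sketched as follows: normalize each listing (lowercase, strip punctuation, keep only digits in the phone number) and compare it against your reference details. The business, directory names, and phone number below are made up for illustration, and the normalization is deliberately basic. For instance, it does not treat “St” and “Street” as the same thing.

```python
import re

def normalize_nap(name: str, address: str, phone: str) -> tuple:
    """Reduce a listing to a comparable form: lowercase text, digits-only phone."""
    def clean(value: str) -> str:
        # Replace punctuation with spaces, then collapse repeated whitespace.
        return " ".join(re.sub(r"[^a-z0-9 ]", " ", value.lower()).split())
    return clean(name), clean(address), re.sub(r"\D", "", phone)

def inconsistent_listings(reference: dict, listings: dict) -> list:
    """Return the sources whose normalized NAP differs from the reference."""
    ref = normalize_nap(**reference)
    return [source for source, nap in listings.items() if normalize_nap(**nap) != ref]

# Hypothetical business details and directory listings.
reference = {"name": "Example Bakery", "address": "12 Main St., Springfield", "phone": "(555) 010-0199"}
listings = {
    "directory_a": {"name": "Example Bakery", "address": "12 Main St, Springfield", "phone": "555-010-0199"},
    "directory_b": {"name": "Example Bakery", "address": "12 Main Street, Springfield", "phone": "555-010-0199"},
}

print(inconsistent_listings(reference, listings))
```

Here `directory_a` differs only in punctuation and passes, while `directory_b` spells out “Street” and gets flagged for a manual look.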

5. Mobile Update – AKA “Mobilegeddon”

Date: April 21, 2015

Percentage of Queries Affected: N/A

What the update targeted: Websites without mobile-friendly pages and pages with mobile usability issues.

Once an afterthought, mobile design had become increasingly important by 2015 due to the surge in users accessing the Internet on mobile devices. Starting with the addition of “mobile-friendly” labels in November 2014, it was clear that Google was heading in the direction of launching a significant organic search update to reward sites that optimized the user experience on all devices. In early 2015, Google started sending out mass emails to webmasters regarding mobile usability errors, subsequently announcing that they would be launching a mobile algorithm update on April 21.

While websites that didn’t render well on mobile devices already felt the pain on mobile SERPs, this update made mobile usability a significant ranking factor for searches made on mobile devices. According to Google, rankings on desktop and tablets were not affected. Webmasters needed to ensure that their websites met the following criteria to avoid losing traction in organic search:

- No Flash or other elements that don’t work well on mobile devices.

- Readable text that does not require users to zoom.

- Content adapted to the size of the screen.

- Links far apart from each other to ensure that users don’t accidentally click on the wrong link.
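Two of the items above can be roughly spot-checked from a page's HTML. The sketch below uses Python's built-in `html.parser` to look for a viewport meta tag (commonly used so content adapts to the screen size) and for embedded Flash. This is a simplified illustration, not how Google's mobile-friendly test works; the class name, function name, and sample markup are hypothetical, and text size or link spacing cannot be judged this way.

```python
from html.parser import HTMLParser

class MobileHints(HTMLParser):
    """Collects two simple signals: a viewport meta tag and embedded Flash."""

    def __init__(self):
        super().__init__()
        self.has_viewport = False
        self.has_flash = False

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "meta" and (a.get("name") or "").lower() == "viewport":
            self.has_viewport = True
        if tag in ("object", "embed"):
            kind = (a.get("type") or "").lower()
            src = (a.get("src") or a.get("data") or "").lower()
            if "shockwave-flash" in kind or src.endswith(".swf"):
                self.has_flash = True

def mobile_hints(html: str) -> dict:
    """Parse an HTML string and report the two signals as booleans."""
    parser = MobileHints()
    parser.feed(html)
    parser.close()
    return {"viewport_meta": parser.has_viewport, "flash": parser.has_flash}

# Hypothetical pages: one with a viewport tag, one that embeds Flash.
good = '<html><head><meta name="viewport" content="width=device-width, initial-scale=1"></head><body><p>Hi</p></body></html>'
bad = '<html><body><embed src="intro.swf" type="application/x-shockwave-flash"></body></html>'

print(mobile_hints(good))
print(mobile_hints(bad))
```

Treat the output as a quick first pass before running pages through a full mobile usability test.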

To make the transition easy for webmasters, Google provided full documentation on their website relating to optimizing sites for mobile, including guides specific to popular platforms such as WordPress & Magento. To test the mobile-friendliness of particular web pages, webmasters and marketers alike can use Google’s online tool here: https://www.google.com/webmasters/tools/mobile-friendly/

6. RankBrain

Date: October 26, 2015

Percentage of Queries Affected: N/A

What the update targeted: Low-quality content, poor user experience, pages without query-specific content.

For those of you who avidly read articles related to Google and search engine optimization, the name RankBrain has probably come up. While not a new algorithm update, it went live in 2015 and is an element of the Hummingbird engine. According to Google, this is a machine-learning AI system that is used to help process the massive number of search queries it receives each day.

Refinement of search results has traditionally relied on human programming. RankBrain was developed to better assist with classifying results based on the search queries entered. It is estimated that around 15% of search queries entered every day have never been processed by Google before, which is kind of crazy when you think about it for a minute. That means 15 out of every 100 searches on Google are for something the world’s most famous search engine has never seen. RankBrain was developed to handle significant amounts of data, better interpret queries, and effectively translate them to provide the most accurate results to users.

Does this mean search engine marketing professionals need to change the way we optimize websites? Of course not, unless you are still relying on outdated and ineffective tactics. Investing in original content creation, both onsite and offsite, is your best bet to rank organically in the long run, in addition to maintaining all technical aspects of your website.

7. Possum

Date: September 1, 2016

Percentage of Queries Affected: N/A

What the update targeted: Local listings and relevance to the searcher’s location.

Unlike some of the other updates listed in this post, the Possum update focused purely on local listings found within the 3-pack and Local Finder on Google. The most significant local search update since Pigeon rolled out in 2014, Possum tweaked the algorithm further to deliver more relevant local results. A quick summary of the update includes:

- Increased search presence for businesses located outside the physical area of a city/region. While the Possum update made it more likely for companies within proximity of the searcher to appear in local results, highly reputable and relevant businesses located outside the physical limits of a specified city saw an increase in impressions.

- Stronger filters were applied to detect duplicate listings and remove them from local search results. A well-known black-hat tactic among search engine marketing professionals (I use that term loosely – more like “cheaters” than “professionals”) is to create multiple listings using the same address or slight variations of it to dominate search results for local terms. As mentioned in a blog post from two years ago, I discovered a particular competitor using this tactic, which did not end well for them (the listings are no longer active).

- The physical location of a user had a stronger impact on search results. While the distance between a user and relevant businesses was always a factor, the Possum update made location a stronger ranking factor in search results.

- Slight variations in the way a query is phrased produced different results. For example, the keywords “internet marketing company,” “internet marketing agency,” and “internet marketing” produced slightly different results, even though I was searching for the same thing from a specific location (you can see more about those tests here).

8. Fred

Date: March 8, 2017

Percentage of Queries Affected: N/A

What the update targeted: Low-quality and thin content.

Although Google routinely updates its algorithm to penalize low-quality content, it seemed like a lot of websites flew under the radar and were able to generate rankings despite having awful content. Fed up with sites spamming search results, Google unleashed Fred, an update that targeted websites violating Google’s Webmaster Guidelines.

Unless you were employing black-hat tactics, you had nothing to worry about when this update launched. However, webmasters that engaged in the following tactics saw their rankings decrease considerably:

- Building low-quality links from private blog networks.

- Publishing affiliate-heavy and ad-centered content that provided no value.

- Over-optimizing keywords within website content.

- Utilizing gateway pages to push affiliate links.

- Prioritizing revenue generation from ads over helping users find information.

According to a report from Search Engine Roundtable, many of the websites affected by this update saw a 50% or higher decrease in organic traffic from Google, representing a significant blow to those sites’ ability to generate revenue. When it comes to search engine optimization, you need to play by the rules set forth by Google and Bing or suffer the consequences.

Summary

A lot of information to process, right? Don’t worry – all of us search marketing professionals are used to it, as Google (and other search engines, for that matter) update their core algorithms on a consistent basis. Earning trust with search engines takes time, which is why SEO is a long-term play and not a 100-metre dash. These algorithm updates are intended to serve users the most relevant content possible, while at the same time penalizing websites that don’t take the time to invest in building trust and authority with search engines.

Now that you understand how each of these significant algorithm updates affects SEO, are you ready to take your marketing to the next level?

Panda/Penguin Image courtesy of StateOfDigital.com

RankBrain Image courtesy of Search Engine Land

(800) 915 7990
